WORKSHOP

MoSCoW Workshop - Snippet

Product pages had low review coverage, which limited both customer confidence and merchandising insight. I led a cross-functional initiative to introduce loyalty-based review incentives that increased participation while preserving credibility.

The program increased daily review volume by about 300 percent, reaching roughly 1,500 reviews per day.

Review volume was lower than expected across product detail pages (PDPs). Marketing and UX partnered to explore whether loyalty points could increase participation without turning the reviews section into a points farm.

The challenge wasn't technically complex. It was strategically complex. How do you incentivize behavior without distorting it? That question shaped every decision from scope to launch.

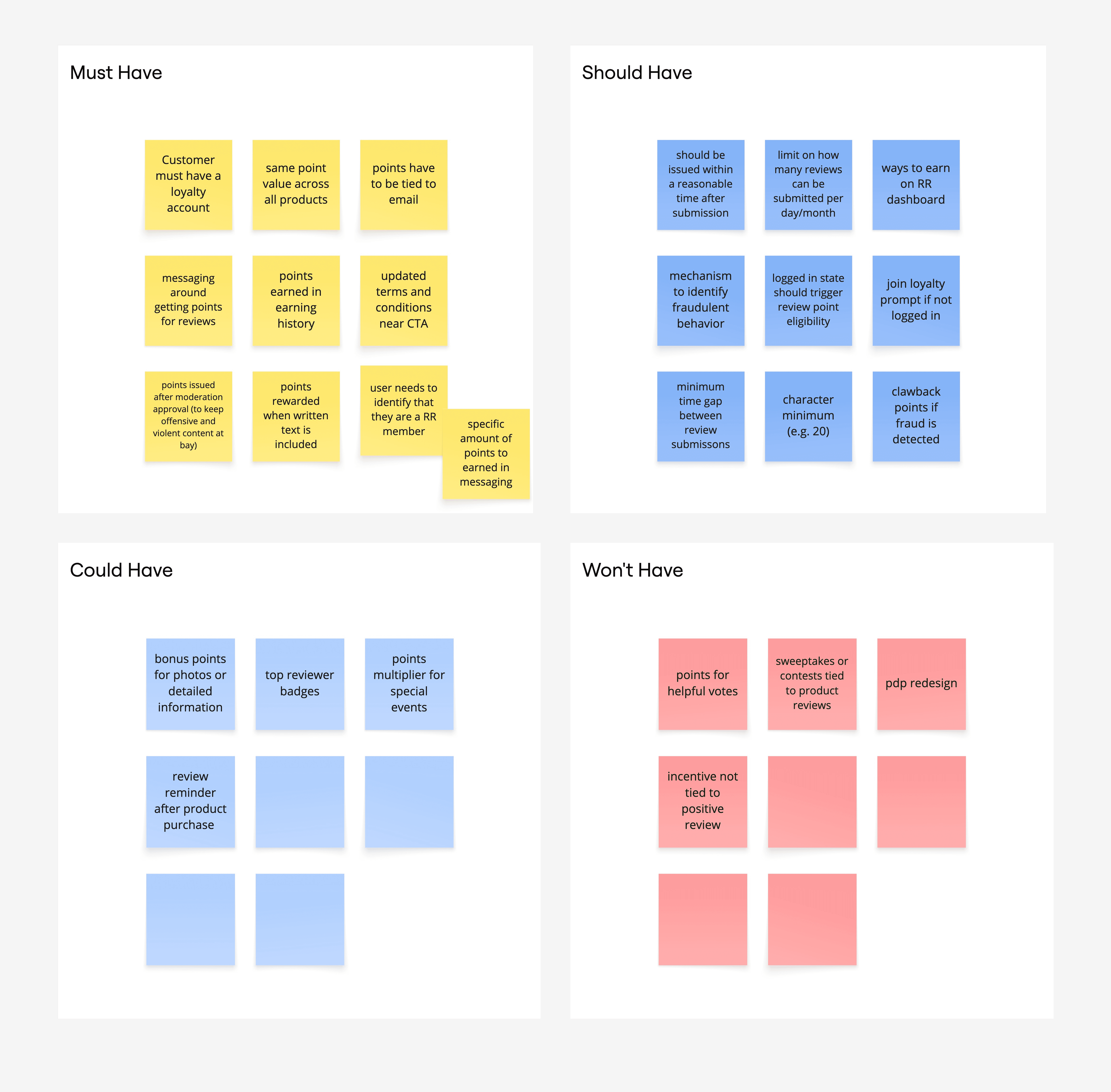

I partnered with another strategist to run a cross-functional MoSCoW prioritization workshop, plus additional working sessions, bringing marketing, engineering, legal, product, and UX into the same room before anyone wrote a spec.

The core tension was real: introduce an incentive strong enough to change behavior, but not so transactional that it undermined the authenticity customers rely on when reading reviews.

PDPs lacked review coverage. Merchandising teams had limited qualitative signal. Customers had less confidence buying without peer input. And the business had no low-friction way to change that.

The program awarded 50 loyalty points per approved review through the existing loyalty program, integrated the earn into current review flows, matched reviews to loyalty accounts via the submitted email, and positioned points as appreciation, not payment.
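As a rough illustration of the earn logic, the sketch below credits 50 points for each approved review whose submitted email matches a loyalty account. It is a minimal sketch, not the production implementation: the `Review` type, the `award_points` function, the in-memory dictionaries, the email normalization, and the one-award-per-review guard are all assumptions made for the example.

```python
from dataclasses import dataclass

# From the case study: 50 loyalty points per approved review.
POINTS_PER_APPROVED_REVIEW = 50


@dataclass(frozen=True)
class Review:
    # Hypothetical shape of a submitted review; field names are assumptions.
    review_id: str
    email: str
    approved: bool


def normalize_email(email: str) -> str:
    # Assumption: emails are matched case-insensitively, ignoring stray whitespace.
    return email.strip().lower()


def award_points(reviews, accounts_by_email, balances, awarded_review_ids):
    """Credit points for approved reviews whose email matches a loyalty account.

    accounts_by_email: dict of normalized email -> loyalty account id
    balances: dict of loyalty account id -> point balance (mutated in place)
    awarded_review_ids: set of review ids already credited (mutated in place)
    Returns the list of review ids credited on this call.
    """
    credited = []
    for review in reviews:
        # Only approved reviews earn, and (by assumption) each review earns once.
        if not review.approved or review.review_id in awarded_review_ids:
            continue
        account_id = accounts_by_email.get(normalize_email(review.email))
        if account_id is None:
            # No loyalty account for this email: the review stands, no points.
            continue
        balances[account_id] = balances.get(account_id, 0) + POINTS_PER_APPROVED_REVIEW
        awarded_review_ids.add(review.review_id)
        credited.append(review.review_id)
    return credited
```

Tying the earn to approval rather than submission, and crediting each review only once, is one way to keep the incentive from rewarding low-effort or duplicate submissions.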